Hi!

My question has to do with my misunderstanding of the Gambler's Fallacy and probability distributions. My university knowledge of probability and statistical analysis has faded quite a bit, mostly because of the difficulty I had understanding some of the concepts I faced there, so please be gentle with me!

In video games and in gambling, I've always had a preconceived idea that I later found out is called the Gambler's Fallacy. For example, in a coin toss scenario, if I toss 3 heads in a row, I *feel* as though there is a higher chance that I will toss a tail next. Another example is a video game like League of Legends, where a character with a 50% critical strike chance may attack without landing a crit (by chance), and so one may believe there is a higher chance to crit on the next attack. A real-life example is roulette, where a player may bet on Red because he has seen Black come up 4 times in a row. And finally, there's a joke I read about a man who was afraid there might be a bomb on his flight, so he brought his own bomb, because surely the probability of there being two bombs on the plane is far lower than the probability of there being one!

So my initial question is: why doesn't the history of these events affect the chance of what comes next?
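To check the "history doesn't matter" claim for myself, here is a minimal Monte Carlo sketch in Python. It is my own illustration, assuming a fair coin simulated with Python's `random` module; `prob_heads_after_streak` is a hypothetical helper name. It tosses a virtual coin many times, finds every toss that comes right after a streak of heads, and checks how often that toss is also heads.

```python
import random

def prob_heads_after_streak(streak_len: int, n_tosses: int, seed: int = 0) -> float:
    """Estimate P(heads on the next toss | the previous `streak_len` tosses were all heads)
    by simulating one long sequence of fair coin tosses (hypothetical helper)."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    tosses = [rng.random() < 0.5 for _ in range(n_tosses)]  # True = heads, fair coin assumed
    streak_hits = 0  # how many times a streak of `streak_len` heads was seen
    next_heads = 0   # how many of those streaks were followed by another head
    for i in range(streak_len, n_tosses):
        if all(tosses[i - streak_len:i]):  # the previous `streak_len` tosses were all heads
            streak_hits += 1
            next_heads += tosses[i]        # bool counts as 1 for heads, 0 for tails
    return next_heads / streak_hits

p = prob_heads_after_streak(streak_len=3, n_tosses=200_000)
print(f"P(heads | 3 heads just before) ~ {p:.3f}")
```

With a fair coin the estimate should land close to 0.5 for any streak length; longer streaks just occur less often, so their estimates are based on fewer samples and are noisier.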

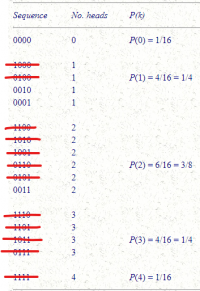

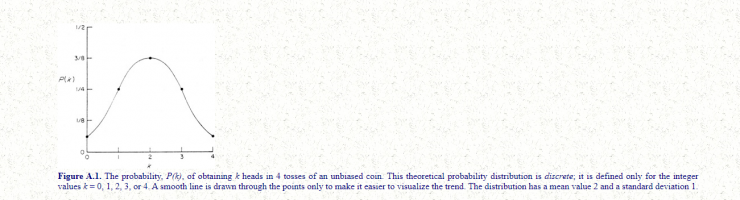

Now I understand that in the coin toss example, although I *feel* as though there is a greater chance to toss a tail, I know that the chance is 50%. No matter how many heads in a row I get, on my next turn, the chance to get a heads or tails will still be 50%. But, I also know that the probability distribution of this scenario looks like this (taken from: https://ned.ipac.caltech.edu/level5/Berg/Berg2.html) :

What I get from this graph is that getting 2 heads out of 4 tosses is more likely than any other outcome (such as 1 head in 4 tosses, or 0 heads). So, if I were to get 2 tails in a row, surely I should expect a higher chance of getting at least 1 head in the last 2 tosses, because the number of outcomes with at least 1 head is greater than the number of outcomes with 0 heads.
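The 4-toss distribution can also be reproduced by brute force: list all 16 equally likely heads/tails sequences and count the heads in each. This is a small Python sketch of that enumeration, assuming a fair coin so that every sequence has probability 1/16. The second count keeps only the sequences that begin with two tails, which is the situation I describe next.

```python
from collections import Counter
from itertools import product

# All 2^4 = 16 equally likely sequences of 4 fair coin tosses, e.g. ('H', 'T', 'T', 'H').
sequences = list(product("HT", repeat=4))

# Distribution of the number of heads over all 16 sequences.
all_counts = Counter(seq.count("H") for seq in sequences)
print("all sequences:", dict(sorted(all_counts.items())))
# prints: all sequences: {0: 1, 1: 4, 2: 6, 3: 4, 4: 1}

# Keep only the sequences that start with two tails (T, T, ?, ?).
tt_start = [seq for seq in sequences if seq[:2] == ("T", "T")]
tt_counts = Counter(seq.count("H") for seq in tt_start)
print("starting with TT:", dict(sorted(tt_counts.items())))
# prints: starting with TT: {0: 1, 1: 2, 2: 1}
```

Dividing each count by 16 gives the probabilities in the graph: 2 heads is the most likely total (6/16), and among the 4 sequences that start with TT, only TTTT ends with zero heads.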

After rolling 2 tails, P(2 heads) + P(1 head) = 1/16 + 2/16 = 3/16

Compared with no heads: P(0 heads) = 1/16
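As a worked check, the same numbers can be written as a conditional probability using the standard definition P(A | B) = P(A and B) / P(B). Here "TT" means the first two tosses were tails, and the 3/16 covers the sequences TTHH, TTHT and TTTH:

```latex
\begin{aligned}
P(\text{at least 1 head in tosses 3 and 4} \mid \text{TT})
  &= \frac{P(\text{TT and at least 1 head})}{P(\text{TT})}
   = \frac{3/16}{4/16} = \frac{3}{4} \\
P(\text{at least 1 head in 2 fresh tosses})
  &= 1 - \left(\tfrac{1}{2}\right)^2 = \frac{3}{4}
\end{aligned}
```

Both lines give 3/4, so the 3/16 versus 1/16 comparison is the same 3-to-1 ratio that two brand-new tosses would give.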

As I write this and collect my thoughts, I think I'm catching a glimpse of what I may be misunderstanding. Perhaps the Gambler's Fallacy has to do with what happens inside a single event (on a microscale), whilst the probability distribution is more about how the events are spread out overall (on a macroscale)? For example, there's a low chance of being born in a first-world country (probability distribution), but the chance that the next baby is quiet is not affected by how many crying babies came before it in a row (fallacy)?

Sorry if I didn't express myself well in that last paragraph. Anyhow, I'd like to hear your explanations, as I want to deepen my knowledge of this matter.

So, my final question is: what am I misunderstanding?