Brotherbobby

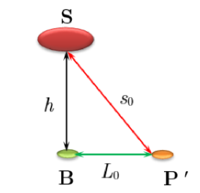

Statement of the Problem: A satellite located at a height [MATH]h[/MATH] above the base B sends radio signals to your place P. These signals give the distance [MATH]s[/MATH] from the satellite to your place, up to a measurement error [MATH]\delta s[/MATH]. Your place P is located at a distance [MATH]L[/MATH] from B along the Earth, as shown in the diagram above. Use the methods of differential calculus to calculate [MATH]L[/MATH] to a first approximation.

Solution: I imagine a simplified triangle formed by the three points, as shown above. Taking [MATH]s_0[/MATH] to be the measured value of [MATH]s[/MATH], the distance BP' is [MATH]L_0 = \sqrt{s_0^2 - h^2}[/MATH]. The true distance is then [MATH]L = L_0 +\delta L \approx L_0 + \left( \frac{dL}{ds}\right)_{s_0}\delta s[/MATH].

From the triangle above, [MATH]L^2 = s^2 - h^2[/MATH]. Differentiating with respect to [MATH]s[/MATH] at fixed [MATH]h[/MATH] gives [MATH]2L\,\frac{dL}{ds} = 2s[/MATH], so [MATH]\frac{dL}{ds} = \frac{s}{L}[/MATH] and [MATH]\left( \frac{dL}{ds}\right )_{s_0} = \frac{s_0}{L_0}[/MATH]. Hence the distance is [MATH]L = L_0 + \frac{s_0}{L_0} \delta s[/MATH], that is, [MATH]\boxed{L = L_0\left( 1+\frac{s_0}{L_0^2}\delta s \right)}[/MATH].
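As a rough sanity check on the first-order formula, here is a small Python sketch that compares it with the exact right-triangle value. It assumes the flat-triangle model used above; the numbers for `h`, `s0` and `delta_s` are made up for illustration, and the function names are my own, not from the problem.

```python
import math


def exact_L(s, h):
    """Exact ground distance from the right triangle: L = sqrt(s^2 - h^2)."""
    return math.sqrt(s**2 - h**2)


def first_order_L(s0, h, delta_s):
    """First-order estimate: L ~ L0 + (s0 / L0) * delta_s."""
    L0 = math.sqrt(s0**2 - h**2)
    return L0 + (s0 / L0) * delta_s


# Illustrative values only (arbitrary units), not taken from the problem.
h = 3.0
s0 = 5.0
delta_s = 0.01

approx = first_order_L(s0, h, delta_s)
exact = exact_L(s0 + delta_s, h)

print("first-order L:", approx)
print("exact L:      ", exact)
print("difference:   ", abs(approx - exact))
```

For these values the two results should differ only at second order in [MATH]\delta s[/MATH], which is what one expects from dropping the higher-order terms of the Taylor expansion.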

The satellite gives the error [MATH]\delta s[/MATH] in the measurement of [MATH]s[/MATH], and so the distance [MATH]L[/MATH] can be found.

Is my working correct?