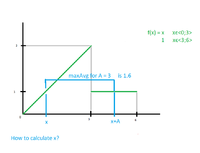

I have a (possibly discontinuous) function f(x), defined piecewise on continuous intervals. Given a segment length A, I am looking for the interval <x;x+A> over which the average value of the function is the largest.

Let's say f(x) = x for x in <0;3> and f(x) = 1 for x in <3;6>. The function is not defined outside of these intervals.
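For reference, integrating this example piece by piece gives its cumulative integral F (a name introduced here for illustration), and the average over <x;x+A>, written g(x), follows directly from it:

$$
F(x) = \int_0^x f(t)\,dt =
\begin{cases}
\dfrac{x^2}{2}, & 0 \le x \le 3,\\[4pt]
\dfrac{9}{2} + (x - 3), & 3 \le x \le 6,
\end{cases}
\qquad
g(x) = \frac{F(x + A) - F(x)}{A}, \quad 0 \le x \le 6 - A.
$$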

A is always less than the difference between the highest and lowest possible values of x (here, A < 6).

I know I can get the average value between x and x+A by integrating the function piece by piece (adding up the integrals over the parts of the window that fall into each piece) and dividing the total by A. But I have no idea how to find the value of x where this average is largest.
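A minimal Python sketch of that piecewise integration, assuming each piece is stored together with an antiderivative of f on that piece (the `Piece` tuple layout and the `window_average` name are hypothetical, chosen only for illustration). Every piece that overlaps the window contributes G(b) - G(a) over the overlap [a, b], and the sum is divided by A:

```python
from typing import Callable, List, Tuple

# Hypothetical representation: each piece is (lo, hi, G), where G is an
# antiderivative of f on [lo, hi]. Pieces are assumed to be sorted and
# to cover the whole domain without gaps.
Piece = Tuple[float, float, Callable[[float], float]]


def window_average(pieces: List[Piece], x: float, A: float) -> float:
    """Average of f over [x, x + A]: sum the integral of every piece
    that overlaps the window, then divide by the window length A."""
    total = 0.0
    for lo, hi, G in pieces:
        a = max(lo, x)
        b = min(hi, x + A)
        if a < b:  # this piece overlaps the window
            total += G(b) - G(a)
    return total / A


# The example from the question: f(x) = x on <0;3>, f(x) = 1 on <3;6>.
example: List[Piece] = [
    (0.0, 3.0, lambda t: t * t / 2),  # antiderivative of x
    (3.0, 6.0, lambda t: t),          # antiderivative of 1
]

print(window_average(example, 1.0, 2.0))  # 2.0  (average of x over [1, 3])
print(window_average(example, 2.0, 2.0))  # 1.75 ((4.5 - 2 + 1) / 2)
```

Since the antiderivatives are exact, each evaluation is a single pass over the pieces rather than a numerical integration.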

For now I am using a "dumb" brute-force solution: I slide a window of length A across the whole domain with a step of A/10, compute the integral at each position, and remember where the value was highest. This is really slow and sometimes inaccurate, since the true maximum can fall between two sampled positions.
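One way to avoid sampling, sketched here under assumptions: write g(x) for the average over <x;x+A>. Wherever f is continuous at both x and x+A, the fundamental theorem of calculus gives g'(x) = (f(x+A) - f(x))/A, and g itself is continuous. So the maximum can only sit at the ends of the search range, at an x where x or x+A lands on a breakpoint of f, or at an x where f(x+A) = f(x). The Python below (the `(lo, hi, f, G)` piece format and all function names are hypothetical) collects the breakpoint candidates, bisects for f(x+A) = f(x) between consecutive candidates, and evaluates g exactly at each one. It assumes f is continuous inside each piece; the bisection is exact for piecewise-linear f like the example, but with more general pieces a sub-interval may contain several roots and would need to be split further.

```python
from typing import Callable, List, Tuple

Fn = Callable[[float], float]
# Hypothetical piece format: (lo, hi, f, G), where f is the function on
# [lo, hi] and G is an antiderivative of f there. Pieces must be sorted
# and cover the domain without gaps.
Piece = Tuple[float, float, Fn, Fn]


def window_average(pieces: List[Piece], x: float, A: float) -> float:
    """Exact average of f over [x, x + A] via the antiderivatives."""
    total = 0.0
    for lo, hi, _f, G in pieces:
        a, b = max(lo, x), min(hi, x + A)
        if a < b:
            total += G(b) - G(a)
    return total / A


def piece_at(pieces: List[Piece], t: float) -> Fn:
    """Return f of the piece containing t (t should not be a breakpoint)."""
    for lo, hi, f, _G in pieces:
        if lo <= t <= hi:
            return f
    raise ValueError(f"{t} is outside the domain")


def best_window(pieces: List[Piece], A: float) -> Tuple[float, float]:
    """Return (x, average) maximising the average of f over [x, x + A]."""
    start, end = pieces[0][0], pieces[-1][1] - A
    breaks = {p[0] for p in pieces} | {p[1] for p in pieces}
    # Candidates: the ends of the search range, plus every x at which
    # x or x + A lands on a breakpoint (g' may jump there).
    cand = {start, end}
    cand |= {b for b in breaks if start <= b <= end}
    cand |= {b - A for b in breaks if start <= b - A <= end}
    points = sorted(cand)

    roots = []
    for p, q in zip(points, points[1:]):
        # Between consecutive candidates, x and x + A stay inside fixed
        # pieces, so g'(x) = (f(x + A) - f(x)) / A is continuous there.
        m = (p + q) / 2
        f_left, f_right = piece_at(pieces, m), piece_at(pieces, m + A)

        def h(t: float) -> float:
            return f_right(t + A) - f_left(t)

        if h(p) * h(q) < 0:  # sign change: bisect for f(x + A) = f(x)
            a, b = p, q
            for _ in range(100):
                mid = (a + b) / 2
                if h(a) * h(mid) <= 0:
                    b = mid
                else:
                    a = mid
            roots.append((a + b) / 2)

    best = max(points + roots, key=lambda x: window_average(pieces, x, A))
    return best, window_average(pieces, best, A)


example: List[Piece] = [
    (0.0, 3.0, lambda t: t, lambda t: t * t / 2),
    (3.0, 6.0, lambda t: 1.0, lambda t: t),
]
print(best_window(example, 2.0))  # (1.0, 2.0): the window <1;3>
```

The cost grows with the number of pieces rather than with a sampling step, and the answer does not depend on how finely the domain is scanned.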

Is there a way to actually calculate this numerically?