Hi all.

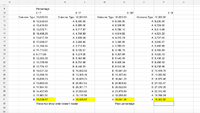

There is a starting total value of 10,000 and a series of random calculations that affect this running total.

Each series of calculations uses one fixed percentage of the current total value. Every calculation has two possible outcomes, each equally likely (50/50):

One outcome is to multiply the total value by the percentage and subtract that amount from the total value, giving the new total value for the next calculation.

The other outcome is to multiply the total value by the percentage and then by 1.5, and add that amount to the total value, giving the new total value for the next calculation.
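To make the two outcomes concrete, here is a minimal Python sketch of one calculation and of a random series. The names `step` and `simulate`, the `seed` parameter, and the use of Python's `random` module are my own choices for illustration, not anything taken from the spreadsheet in the images.

```python
import random


def step(total, pct, favourable):
    """Apply one calculation to the running total.

    pct is the fixed percentage written as a fraction (0.1 means 10%).
    """
    amount = total * pct
    if favourable:
        # Second outcome: add 1.5 times the amount.
        return total + amount * 1.5
    # First outcome: subtract the amount.
    return total - amount


def simulate(pct, n_calcs=100, start=10_000, seed=None):
    """Play out n_calcs calculations, each outcome chosen 50/50 at random."""
    rng = random.Random(seed)
    total = start
    for _ in range(n_calcs):
        total = step(total, pct, rng.random() < 0.5)
    return total


if __name__ == "__main__":
    # One random series at 10%; the result differs from seed to seed.
    print(simulate(0.10, seed=1))
```

Passing a `seed` only makes a particular random series repeatable; leaving it as `None` gives a fresh series each time.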

The calculations really are played out at random, but suppose that after 100 calculations there were exactly 50 of each type of outcome. Then, for a given percentage, a final total can be worked out and compared with the result of running the series again with a different percentage.
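Under that exactly-50-of-each assumption the final total does not depend on the order of the outcomes, because each calculation just multiplies the running total by either `1 - pct` or `1 + 1.5 * pct`. A small sketch of that shortcut follows; the function name `final_total` and its parameters are hypothetical names of mine.

```python
def final_total(pct, wins=50, losses=50, start=10_000):
    """Final total after a fixed number of each outcome.

    pct is the fixed percentage written as a fraction (0.1 means 10%).
    Each subtracting outcome multiplies the total by (1 - pct), and each
    adding outcome multiplies it by (1 + 1.5 * pct).
    """
    return start * (1 - pct) ** losses * (1 + 1.5 * pct) ** wins


if __name__ == "__main__":
    # Compare two different percentages under the same 50/50 split.
    for pct in (0.05, 0.10):
        print(pct, final_total(pct))
```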

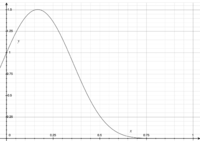

My question is: why isn’t the outcome linear (i.e. a greater percentage giving a greater final total)? What I see instead is that the final total grows as the percentage increases from 0.001, peaks at a certain percentage, and then shrinks for percentages above that peak. Why is this so, instead of linear?
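The rise-then-fall pattern can be reproduced with a short sweep over percentages, again assuming exactly 50 of each outcome. This is only a sketch: the function name, the grid of percentages from 0.001 to 0.5, and the variable names are assumptions of mine. The point it illustrates is that one adding and one subtracting outcome together multiply the total by `(1 - pct) * (1 + 1.5 * pct)`, which is a quadratic in the percentage rather than a straight line.

```python
def final_total_even_split(pct, pairs=50, start=10_000):
    """Final total when adding and subtracting outcomes occur equally often.

    pct is the fixed percentage written as a fraction (0.1 means 10%).
    """
    # Combined effect of one adding and one subtracting outcome.
    pair_factor = (1 - pct) * (1 + 1.5 * pct)
    return start * pair_factor ** pairs


# Hypothetical grid: 0.001, 0.002, ..., 0.500
percentages = [i / 1000 for i in range(1, 501)]
totals = {p: final_total_even_split(p) for p in percentages}

# Percentage on the grid that gives the largest final total.
best_pct = max(totals, key=totals.get)

if __name__ == "__main__":
    print("peak percentage on this grid:", best_pct)
    print("final total at the peak:", totals[best_pct])
```

Printing `totals` for a few percentages on either side of `best_pct` shows the final total climbing up to the peak and dropping off after it.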

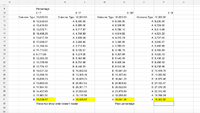
